Meta AI's Private Chat Mode: A New Era of Confidential AI Interaction Amid Legal Challenges

In a groundbreaking move for privacy-conscious users, Meta has introduced an "incognito chat" feature for its AI assistant across the Meta AI app and WhatsApp. This development arrives as OpenAI faces lawsuits over user chat logs, raising critical questions about data retention and safety. Below, we explore the key aspects of this new mode through a series of detailed questions and answers.

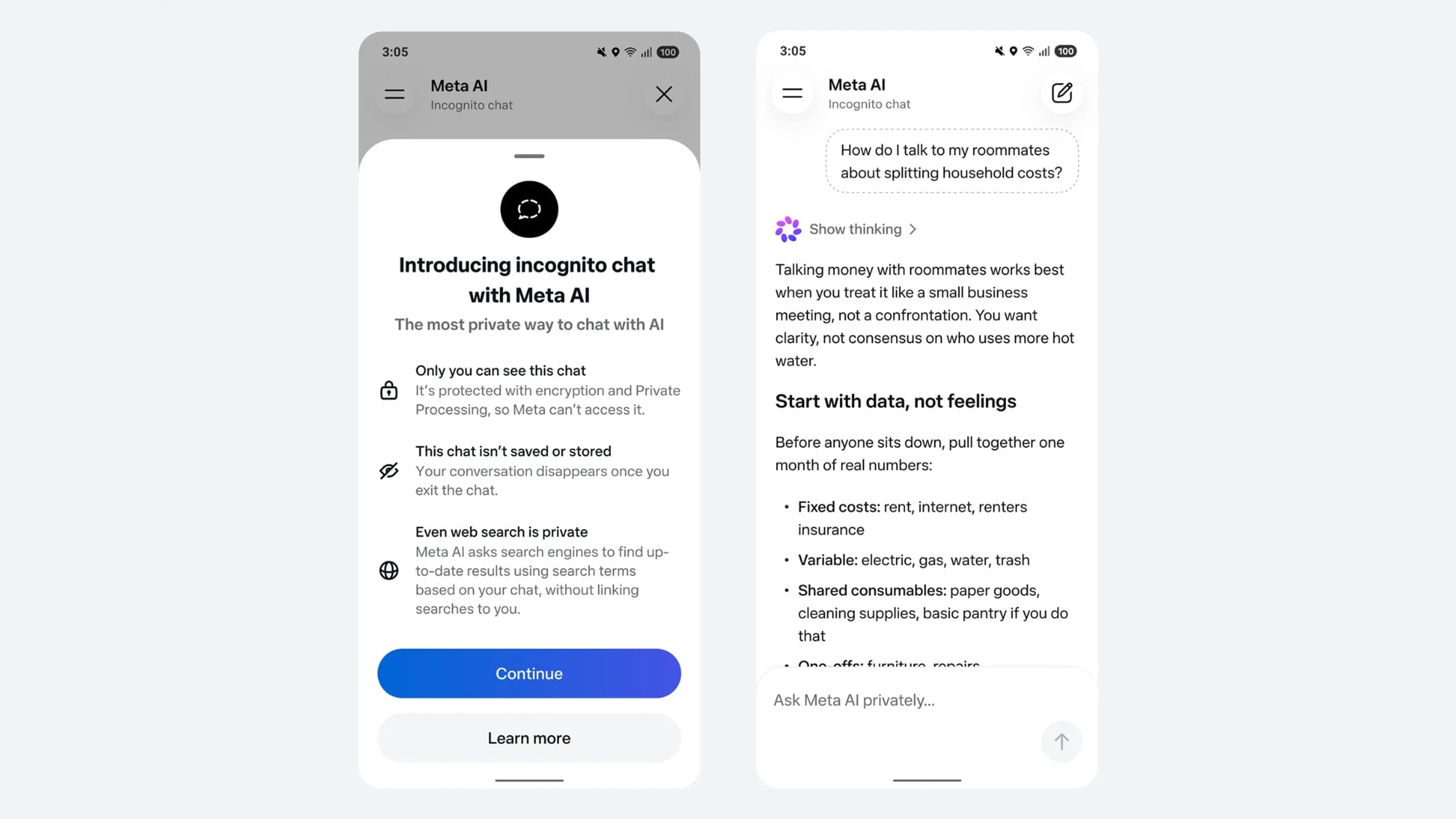

What Exactly Is Meta AI's Incognito Chat Mode?

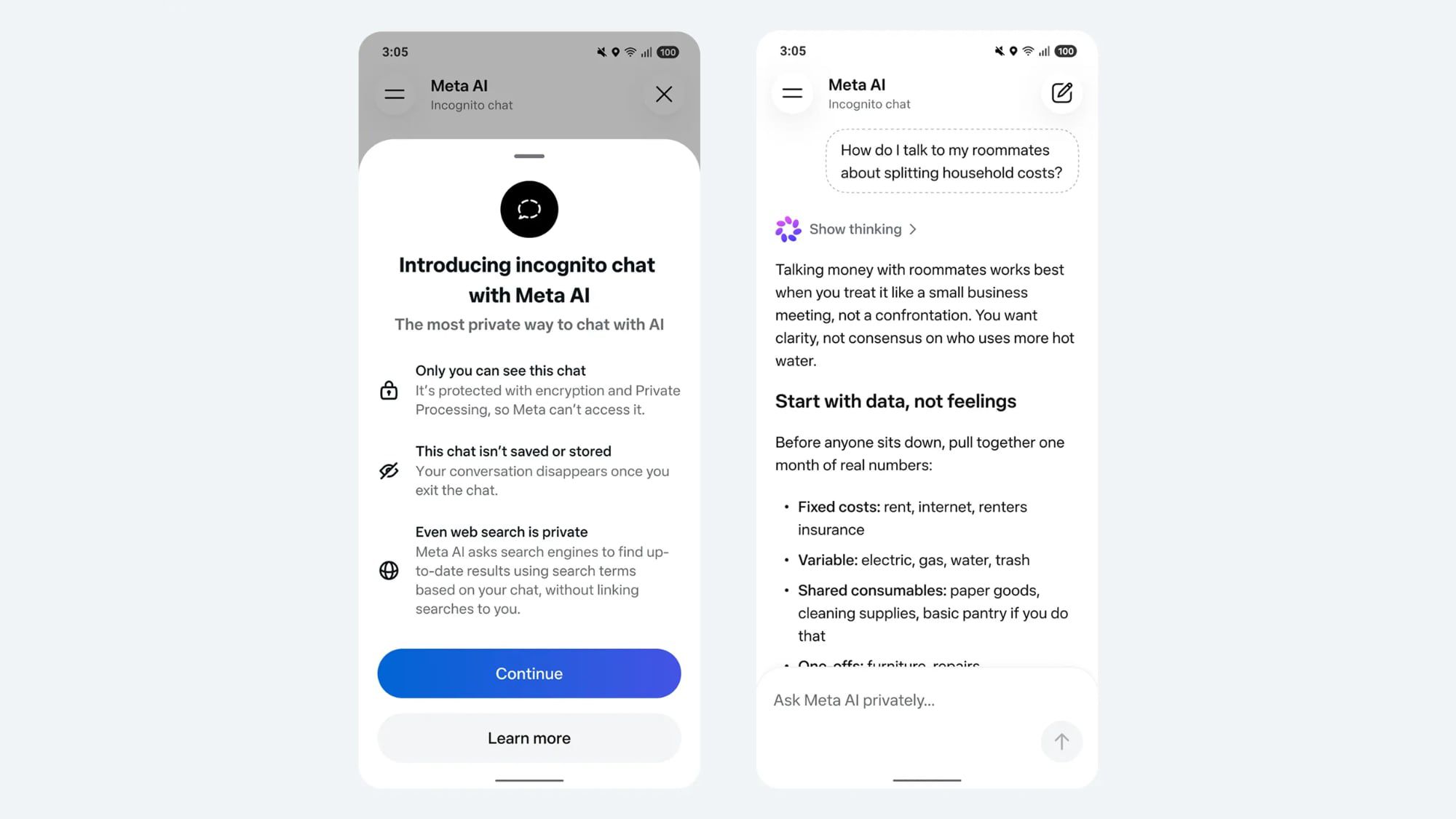

Incognito chat is a new private mode for interacting with Meta AI. As announced by CEO Mark Zuckerberg, no conversation logs are stored on Meta's servers, which the company describes as a first for a major AI product. The mode runs AI inference inside a Trusted Execution Environment (TEE), an isolated, hardware-protected part of a server, so that, according to Meta, even the company itself cannot access the conversation data. When you exit a chat session, all messages disappear from your device, and nothing is saved or logged. Web searches conducted within incognito chat are also private, with no search information linked to your identity. Meta likens this level of confidentiality to end-to-end encryption, in that only you and the AI can view the exchange. The feature is rolling out to the Meta AI app and WhatsApp over the coming months, as Meta seeks to position itself as a leader in privacy-focused AI.

Why Is Meta Introducing This Feature Now, Given the OpenAI Lawsuits?

The timing is no coincidence. OpenAI is embroiled in multiple lawsuits where stored chat logs have been used as evidence—for example, in cases alleging that ChatGPT provided incorrect medical advice leading to a teen's overdose and other tragic outcomes. Without these logs, plaintiffs would have far less evidence to support their claims. Meta anticipates that as AI becomes more integrated into daily life, users will need to discuss sensitive topics without fear of their data being exposed or used against them. By offering a private chat option, Meta addresses growing concerns about data retention and legal liability. Zuckerberg emphasized that "to get the most from personal superintelligence, we'll all need ways to discuss sensitive topics in ways that no one else can access." This preemptive move may also shield Meta from similar lawsuits in the future.

How Does This Compare with Privacy Options from Google and OpenAI?

Both Google and OpenAI offer temporary chat options, but they fall short of Meta's promise. Even in their temporary modes, Google keeps data for up to three days and OpenAI retains logs for 30 days. In contrast, Meta's incognito chat stores no logs at all, and conversations are deleted from devices upon exit. The key differentiator is the use of a Trusted Execution Environment (TEE), which isolates AI processing from the rest of Meta's infrastructure so that even the company cannot peer into chats. For users concerned about permanent records, this is a significant upgrade. However, incognito mode currently supports only text, with no image uploads, and includes safety guardrails to refuse harmful or illegal queries. It is not as feature-rich as standard chat; the trade-off is stronger privacy.
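To make the retention comparison concrete, here is a minimal Python sketch that computes how long server-side logs of a temporary chat could still exist under each policy. The retention windows are the figures reported in this article, not values taken from vendor documentation, and the mode names and helper functions are hypothetical labels chosen for illustration.

```python
from datetime import datetime, timedelta

# Retention windows as reported in this article (assumed, not vendor-verified).
# The dictionary keys are hypothetical labels, not official product names.
RETENTION = {
    "google_temporary": timedelta(days=3),
    "openai_temporary": timedelta(days=30),
    "meta_incognito": timedelta(0),  # no server-side logs at all
}


def purge_deadline(mode: str, chat_ended: datetime) -> datetime:
    """Return the latest time at which server-side logs could still exist."""
    return chat_ended + RETENTION[mode]


def logs_may_exist(mode: str, chat_ended: datetime, now: datetime) -> bool:
    """True if 'now' falls inside the retention window for the given mode."""
    return now < purge_deadline(mode, chat_ended)


if __name__ == "__main__":
    ended = datetime(2025, 1, 1)
    check_time = ended + timedelta(days=10)
    for mode in RETENTION:
        print(mode, logs_may_exist(mode, ended, check_time))
```

Under these assumed figures, a chat checked ten days after it ended could still have logs only under the 30-day policy, which is the gap the article describes.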

What Safety Guardrails Are in Place for Incognito Chat?

Despite the emphasis on privacy, incognito chat is not a free-for-all. According to WhatsApp head Will Cathcart, the AI has built-in safety guardrails that prevent it from answering questions deemed harmful or illegal. If a user asks about drug interactions or self-harm, the AI will refuse and steer the conversation in a different direction. This is crucial given the backdrop of lawsuits where ChatGPT allegedly provided dangerous advice. The guardrails operate within the TEE, ensuring that even the moderation process is private. Users cannot upload images in this mode, likely to prevent harmful visual content from being processed. These measures strike a balance between confidentiality and responsibility, aiming to protect users without logging their queries.

What Are the Implications for Users and the AI Industry?

For users, incognito chat offers a safe space to explore sensitive topics—mental health, legal advice, personal dilemmas—without leaving a digital footprint. This could reduce hesitation to use AI for confidential matters. For the industry, Meta is setting a precedent that may force competitors to revisit their data retention policies. If incognito chat becomes popular, other AI providers might need to adopt similar technologies like TEEs to remain competitive. However, the trade-off is limited functionality (text only) and potential challenges in abuse detection. The legal landscape is also shifting: as seen with OpenAI's lawsuits, chat logs can be both a liability and a necessity for proving harm. Meta's approach might reduce legal exposure but could also complicate efforts to investigate misuse. Overall, this feature signals a major step toward privacy-first AI design.

When Will Incognito Chat Be Available, and on Which Platforms?

Meta's incognito chat is rolling out over the coming months, first on the Meta AI app and WhatsApp. Initially, it will support only text-based conversations, with no images or file uploads. The feature is expected to be available globally once fully deployed. Users will find it as an option within the AI assistant interface, similar to how browsers offer incognito windows. Given the size of Meta's user base, millions of people could gain access to private AI interactions. The gradual rollout suggests Meta is carefully managing the infrastructure demands of the TEE. For now, those eager to test the feature should watch for updates in WhatsApp or the Meta AI app. As competition heats up, other platforms may accelerate their own privacy-focused offerings.
