A Step-by-Step Guide for UK Policymakers: Addressing Online Harm Without Breaking the Web

Introduction

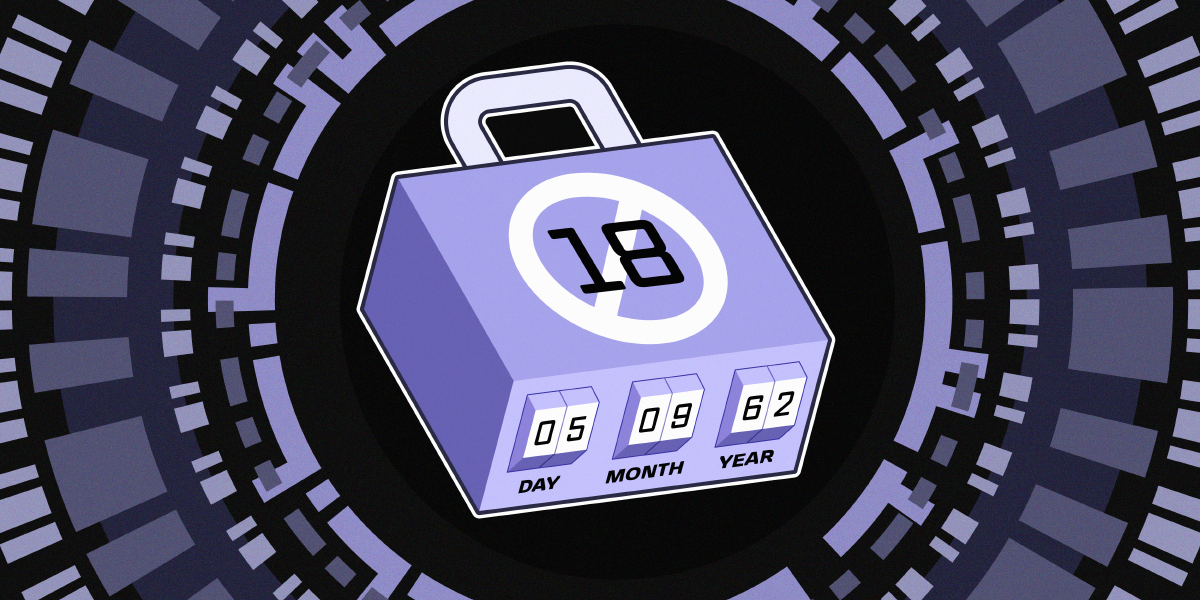

Protecting children and all users online is a critical policy goal, but the path to achieving it requires careful navigation. The Electronic Frontier Foundation (EFF), along with 18 organizations including Mozilla, the Tor Project, and the Open Rights Group, has urged UK policymakers to rethink their approach. Instead of imposing sweeping age-gating and access restrictions that undermine the open internet, they advocate for targeting the root causes of online harm. This step-by-step guide translates their key recommendations into actionable steps for policymakers, privacy advocates, and anyone working to shape digital regulation. By following these steps, you can craft policies that genuinely reduce harm while preserving the internet’s openness, interoperability, and accessibility.

What You Need

- Understanding of the current UK legislative landscape, particularly the Children’s Wellbeing and Schools Bill and related proposals.

- Knowledge of the coalition’s joint letter (EFF, Mozilla, Tor Project, Open Rights Group, and 15 other organizations) and its core arguments.

- Familiarity with age assurance technologies, their accuracy limitations, and privacy implications.

- Awareness of systemic platform design flaws (e.g., engagement-maximizing algorithms, data collection for targeted advertising).

- Commitment to preserving the open architecture of the internet (interoperability, accessibility, anonymity).

- Access to impact assessments and consultation with civil society, youth groups, and technical experts.

- Tools for drafting rights-respecting policy language (e.g., privacy impact assessment templates, international best practices).

Step-by-Step Guide

Step 1: Recognize the Limitations of Blanket Age-Gating and Age Assurance

Before writing any new regulation, acknowledge that mandatory age assurance technologies are often inaccurate, privacy-invasive, or both. The coalition letter warns that requiring such systems across social media, video games, VPNs, and even basic websites forces all users to verify their identity just to access the web. This expands surveillance, increases the risk of data breaches, and undermines anonymity. Instead of defaulting to age gates, consider them only as a last resort for high-risk services, and always with strong data protection safeguards.

Step 2: Shift Focus from Access Restrictions to Platform Accountability

The letter emphasizes that many digital platforms are designed to maximize engagement and profit through pervasive data collection and targeted advertising, often at the expense of user safety. Rather than banning access, policymakers should hold companies accountable for these systemic practices. Require transparency around algorithmic amplification, limit addictive design patterns, and impose duty-of-care obligations that address the business models driving harm.

Step 3: Address the Root Drivers of Online Harm

Proposed measures like age-gating miss the point: they don’t tackle the underlying drivers. The coalition calls for policies that curb exploitative data collection, eliminate manipulative dark patterns, and ensure platforms prioritize user rights by design. For example, mandate privacy-by-default settings, restrict the use of children’s data for targeting, and require independent audits of harmful content propagation. This step directly reduces harm without fragmenting the web.

Step 4: Preserve the Open Architecture of the Internet

Age-gating at scale could splinter the web into restricted jurisdictions, limit access to information, and entrench gatekeepers like app stores and platform ecosystems. Policymakers must protect the qualities that made the internet a global public resource—interoperability, accessibility, and openness. Ensure any new rules do not inadvertently create barriers to entry for small services or force users into walled gardens. The letter warns that such fragmentation weakens the internet’s core value.

Step 5: Protect Anonymity and Data Privacy

Mandatory identity verification erases the ability to browse anonymously, which is essential for many users, including vulnerable individuals seeking support or information. The coalition stresses that these policies risk expanding surveillance and enabling data breaches. Instead, design regulations that respect privacy by limiting data collection, requiring least-privilege access, and allowing pseudonymous or anonymous participation where feasible. Anonymity itself is a harm-reduction tool.

Source: www.eff.org

Step 6: Empower Young People’s Access to Vital Resources

The internet remains a vital space for young people—offering information, support networks, and expression opportunities that may not exist offline. Policies that restrict access risk cutting off these lifelines without meaningfully reducing harm. When drafting rules, explicitly preserve and enhance youth access to educational content, mental health resources, and community forums. Involve youth in policy design to ensure their needs are met.

Step 7: Build Coalitions and Learn from International Best Practices

The coalition behind the letter includes global leaders in digital rights. Policymakers should engage with civil society, technical experts, and international peers to craft balanced solutions. Study frameworks like the EU’s Digital Services Act, which focuses on systemic risk management rather than blanket bans. Draw on the expertise of organizations like EFF, Mozilla, and the Tor Project to test proposed age assurance technologies and evaluate their true impact.

Step 8: Integrate User Rights by Design from the Start

Rather than retrofitting privacy and safety, build them into every stage of policy development. Conduct privacy impact assessments, include mandatory data protection by design and default, and require independent oversight. The coalition argues that user rights should not be an afterthought but a foundational principle. This ensures that policies are both effective and respectful of fundamental freedoms.

Tips for Effective Implementation

- Engage directly with youth and marginalized communities to understand how restrictions affect their online lives—their insights are invaluable.

- Avoid one-size-fits-all solutions: not all services pose the same risk; prioritize interventions based on risk assessment.

- Test age assurance technologies rigorously for accuracy, bias, and privacy impact before mandating their use. Many fall short on one or more of these criteria.

- Remember the original letter’s key message: protecting users online requires more than heavy-handed restrictions. It demands thoughtful, rights-respecting policies that tackle the business models and design choices driving harm, while preserving the open, global nature of the web.

- Iterate and adapt: technology and harm profiles evolve; regulations should include periodic review and sunset clauses.
